Princeton Senior Thesis: "Rethinking the Hype of Transformer Models: Examples from Electric Load Forecasting"

Abstract: As the electric grid comes under increasing stress, building accurate load forecasting models remains a persistent challenge. This thesis pursues an accurate, general, and scalable load forecasting model that can be deployed across a wide variety of energy landscapes. Current production-level forecasting solutions are highly customized, building detailed, region-specific insights into the model architectures. While effective, these solutions are complex and do not easily transfer to other regions.

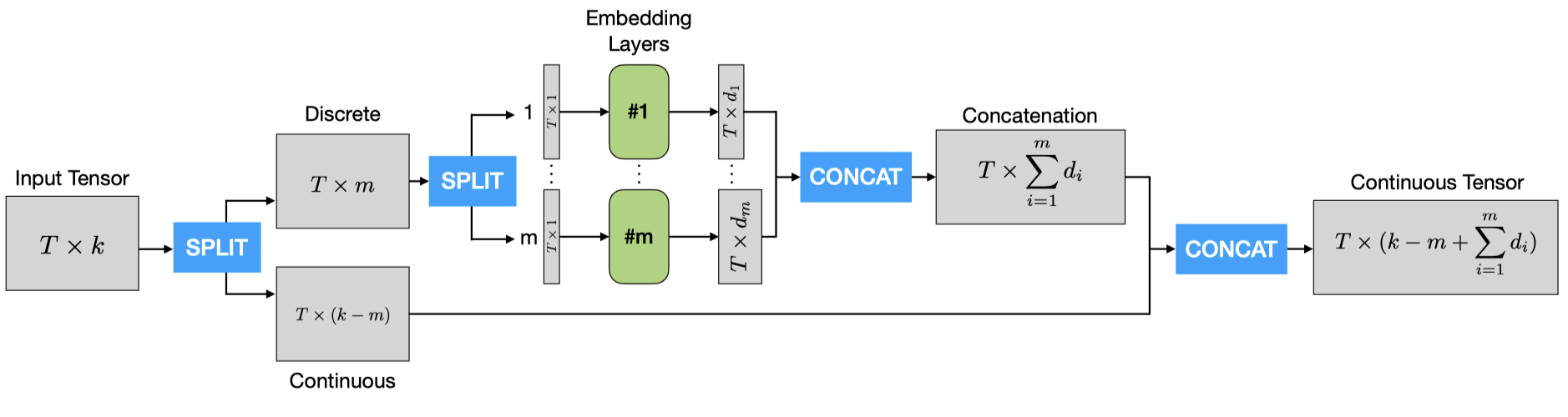

Originally introduced in 2017 for natural language processing, transformers (the key component of large language models such as ChatGPT) have proven useful in a range of fields. This thesis explores whether transformers are actually effective for short-term load forecasting. We first develop a specialized transformer-based model that uses previous loads, together with weather and temporal variables, to predict future loads. We then train and evaluate this model on new data, benchmarking its performance against that of several simpler models. Despite the broad success of transformers in numerous fields, we find that the transformer-based model is, surprisingly, outperformed by a very simple linear neural network. This result challenges the perception of transformers as a highly performant sequence modeling architecture, at least for short-sequence time-series problems.
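To make the comparison concrete, the following is a minimal PyTorch sketch of the two kinds of model the abstract contrasts: a single linear layer over a flattened history window, and a small transformer encoder over the same window. It is not the thesis's implementation. The class names, window length, forecast horizon, feature count, and all layer sizes are hypothetical values chosen only for illustration.

```python
import torch
from torch import nn

# Hypothetical sizes for illustration only (not taken from the thesis).
LOOKBACK = 24    # past time steps fed to the model
HORIZON = 6      # future time steps to predict
N_FEATURES = 4   # e.g. load, temperature, hour of day, day of week


class LinearForecaster(nn.Module):
    """One linear layer mapping the flattened history window to future loads."""

    def __init__(self, lookback, n_features, horizon):
        super().__init__()
        self.proj = nn.Linear(lookback * n_features, horizon)

    def forward(self, x):
        # x: (batch, lookback, n_features) -> (batch, horizon)
        return self.proj(x.flatten(start_dim=1))


class TransformerForecaster(nn.Module):
    """Small transformer encoder; the forecast is read from the last time step."""

    def __init__(self, lookback, n_features, horizon,
                 d_model=32, n_heads=4, n_layers=2):
        super().__init__()
        self.embed = nn.Linear(n_features, d_model)
        # Learned positional embedding, one vector per history step (an assumption).
        self.pos = nn.Parameter(torch.zeros(1, lookback, d_model))
        layer = nn.TransformerEncoderLayer(
            d_model=d_model, nhead=n_heads, dim_feedforward=64, batch_first=True
        )
        self.encoder = nn.TransformerEncoder(layer, num_layers=n_layers)
        self.head = nn.Linear(d_model, horizon)

    def forward(self, x):
        # x: (batch, lookback, n_features) -> (batch, horizon)
        h = self.encoder(self.embed(x) + self.pos)
        return self.head(h[:, -1])


def count_parameters(model):
    """Total number of trainable parameters in a module."""
    return sum(p.numel() for p in model.parameters() if p.requires_grad)


torch.manual_seed(0)
batch = torch.randn(8, LOOKBACK, N_FEATURES)  # random stand-in for real data

linear_model = LinearForecaster(LOOKBACK, N_FEATURES, HORIZON)
transformer_model = TransformerForecaster(LOOKBACK, N_FEATURES, HORIZON)

with torch.no_grad():
    linear_out = linear_model(batch)
    transformer_out = transformer_model(batch)

n_linear = count_parameters(linear_model)
n_transformer = count_parameters(transformer_model)
```

Both models accept the same `(batch, lookback, n_features)` tensor and return one value per forecast step, so they can share a training loop and a loss such as `nn.MSELoss`. With these illustrative sizes the linear model has far fewer parameters than the transformer, which gives a sense of how simple the baseline described in the abstract can be.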

PyTorch, Transformers, Python